The Missing Safety Layer for Mental Health AI

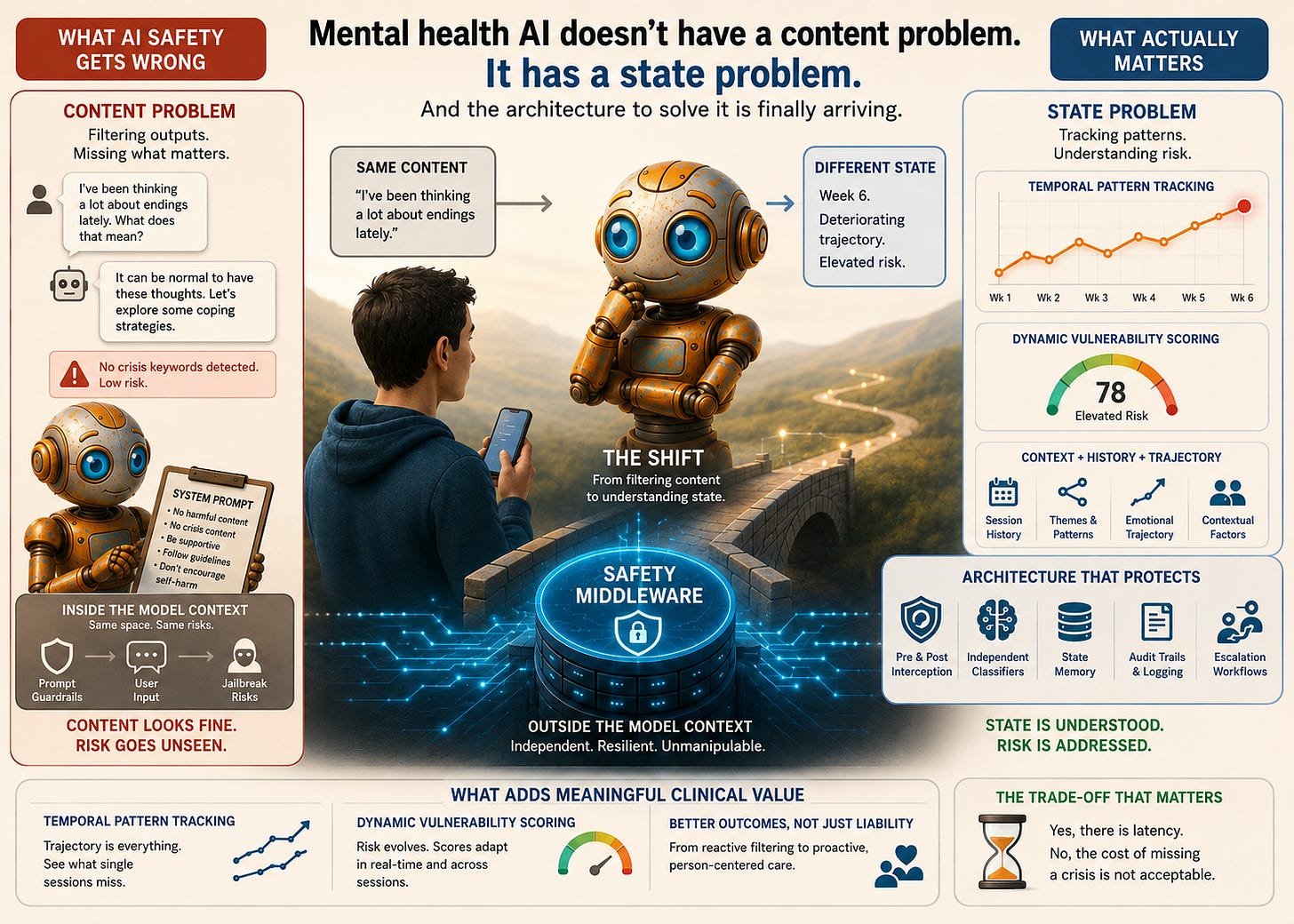

Mental health AI doesn't have a content problem, it has a state problem. And the architecture to solve this has arrived.

Note: The genesis of this article was sparked in part by discussions with Chris Rhyss Edwards of CommonLayer.ai, whose work on middleware infrastructure for AI safety, governance, compliance, and regulatory oversight reflects a broader architectural shift now emerging across high-consequence AI systems.

Content moderation is not clinical safety. These two things are being confused at scale, and the confusion is costing people their lives.

Every week, new mental health AI applications launch. Most of them are structurally identical: a foundation model, a system prompt, a chat interface, and a terms of service document that transfers clinical risk onto the user (though oft unread). The safety question gets answered the same way across almost all of them: “The model handles that.” Developers believe this because the major foundation models have improved substantially on safety benchmarks.

Yet the architecture underneath remains broken. And understanding why requires stepping back from the engineering frame and looking at this through the lens of clinical psychology, because that is the frame the engineering has been ignoring.

The Scale of the Problem

There are now more than 20,000 mental health and wellbeing applications available in major app stores. The global mental health apps market reached USD 8.53 billion in 2025 and is projected to grow at a compound annual growth rate of over 17 percent through the next decade. The numbers are striking but what they obscure is more important than what they reveal.

Fewer than 5 percent of all mental health apps in app stores have confirmed clinical evidence. The vast majority are polished and persuasively well-marketed, yet clinically “hollow”. And some, snake-oil.

A systematic assessment of the 100 most visible mental health apps on Google Play and Apple App Store, conducted using the American Psychiatric Association’s evaluation model, found that half failed to meet even the first criterion of accessibility, a further 20 percent of the remainder failed on security and privacy, and only one app out of 92 assessed fulfilled all five evaluation criteria. The same research found that App store search algorithms favour popularity metrics over safety or effectiveness, which means the products most visible to vulnerable users are not the ones most likely to be safe. They are the ones most likely to have been downloaded and reviewed.

Into this landscape, the question of what safety actually means in AI-mediated mental health has been largely answered by assumption rather than architecture.

What Happens When the Assumption Breaks

In June 2023, the National Eating Disorders Association suspended its AI chatbot, Tessa, after users reported that it was recommending calorie restriction, weight loss targets, and body fat measurement to people who had explicitly disclosed they were struggling with eating disorders. The chatbot had recommended a 10-step weight loss guide, suggested daily calorie deficits, and directed users toward purchasing body composition measurement tools.

Sharon Maxwell, a consultant in the eating disorder field who had herself survived an eating disorder, tested Tessa and documented the results publicly.

“Every single thing Tessa suggested were things that led to my eating disorder.”

The NEDA helpline had previously handled nearly 70,000 contacts annually, staffed by six paid employees and around 200 volunteers. Tessa was intended to scale that capacity. What it actually demonstrated was that deploying a generative AI system into a high-acuity clinical population without a safety layer between the model and the user is not a scalability solution. It is a liability waiting to be activated.

Critically, the original, rule-based version of Tessa had been carefully designed and clinically reviewed. The problem emerged when a technology partner added generative AI capabilities to the system without NEDA’s awareness, and without any external safety architecture to catch outputs that drifted outside clinical boundaries. The model changed. The governance layer did not exist to detect or contain the consequences.

Solving for the Wrong Thing

When most organisations talk about AI safety in mental health and wellbeing contexts, they are talking about outputs: harmful language, crisis keywords, suicide-adjacent content. The safety question gets framed as a filtering problem, and the filtering gets built into the model’s instructions.

The problem with this approach is not primarily that system prompt guardrails are imprecise, although they are. It is that they are solving for the wrong thing entirely.

A person in genuine psychological crisis does not always produce content that triggers a keyword filter. They ask questions that sound intellectual, they frame their distress as curiosity, they test the edges of a conversation before revealing what is actually driving them, and they return to the same themes across multiple sessions in ways that no single-turn safety evaluation would ever catch. The content, taken individually, looks unremarkable. The state, tracked over time, is anything but. And it is the state that carries the clinical risk.

Most guardrails rely on static refusal templates or generic crisis-response heuristics, which can blunt overtly harmful requests but often fail to address subtle forms of psychological risk, implicit self-harm intent, or delusion-consistent reasoning.

That finding, from a 2025 analysis of mental health AI safety, captures the structural problem precisely. The guardrails are calibrated for the obvious. Clinical risk, as any experienced practitioner knows, is rarely obvious.

This is a distinction that clinical psychology has understood for decades. In assessment and formulation, we do not treat what someone says as a direct readout of their risk profile. We attend to pattern, trajectory, context, and the relationship between what someone presents and what their history suggests about where they are heading. A person saying “I’ve been thinking a lot about endings lately” in week six of a deteriorating emotional trajectory is a fundamentally different clinical picture from a person making the same statement in week two of a stable course of support. The content is identical, the state is not.

Now extend that clinical insight to what is actually happening inside deployed AI systems at scale. Millions of conversations, daily, with no temporal memory, no trajectory awareness, no mechanism for recognising that the person in today’s session is not the same clinical picture as the person in last Tuesday’s session. Every conversation treated as its own isolated event. Every safety evaluation reset to zero.

The system is not missing edge cases. It is structurally blind to the most clinically significant dimension of risk.

The Architectural Problem That Better Prompting Cannot Solve

Prompt-based guardrails remain the dominant safety strategy across most deployed mental health and wellbeing products, but they are structurally incapable of solving what is fundamentally a state and control problem. Prompt-level safety instructions are embedded inside the same context window as user input, which means they are subject to the same manipulations, drifts, and adversarial pressures as any other text the model processes. In this geometry, “safety” is merely another string in the conversation, and the model has no privileged, untouchable safety substrate to stand on.

Recent work has made this limitation empirical rather than hypothetical. Hackett et al. systematically evaluated six widely used prompt-based guardrail systems, including Microsoft’s Azure Prompt Shield and Meta’s Prompt Guard, using a suite of prompt-injection and jailbreak attacks. Their study showed that both character-injection methods (e.g. emoji smuggling, invisible Unicode tags, and upside-down text) and adversarial machine learning–based evasion techniques can fully bypass these guardrails in many settings, while preserving the adversarial functionality of the original prompt. In some configurations, the authors report attack success rates approaching 100 percent for specific guardrails and attack families, meaning every adversarial input in that evaluation slice was misclassified as benign while still eliciting the intended unsafe model behaviour.

This is not a theoretical upper bound, it is an empirical result obtained against production-grade systems operated by some of the most well-resourced technology companies in the world. A sufficiently constructed adversarial prompt does not merely degrade the performance of prompt-based guardrails, it can eliminate their protective effect entirely within the tested conditions. That finding would be serious in any domain. In mental health, it is clinically unacceptable. A safety architecture that can be dismantled through the ordinary course of conversation is not, in any meaningful sense, a safety architecture.

Organisations that rely on prompt-level guardrails as their primary protection mechanism are accumulating clinical and legal exposure that most have not yet mapped with any rigor.

Safety middleware addresses this problem at the architectural level. In software engineering, middleware is defined as an intermediate software layer that sits between applications and underlying systems, mediating communication, enforcing policies, and providing shared services. Translated into the LLM setting, safety middleware is implemented outside the model’s context window and parameter space. It wraps the model API rather than residing inside it. It intercepts requests before the model sees them and responses after the model produces them, runs dedicated classification and policy-enforcement logic that the conversation itself cannot modify, and maintains its own state and logs independently of the model’s internal memory or dialogue history. Because its logic and storage are external, middleware can track behavioural patterns across sessions without relying on the model to remember, interpret, or act on its own prior outputs, and in regulated environments it can generate auditable records that exist independently of what the model “believed” it was doing on any given turn.

These are not incremental improvements in prompt engineering. They are categorically different capabilities. The separation of safety logic from the model’s conversational context is what makes robust governance possible, and robust governance is what makes deployment in high-consequence human settings ethically and legally defensible

The evidence on what this separation produces is striking. A 2026 study examining 20,000 real user conversations compared the safety profile of a purpose-built mental health AI, one with layered external safeguards, against general-purpose frontier models on identical benchmarks.

The purpose-built system produced harmful content in 0.4 to 11.27 percent of suicide and self-harm scenarios. General-purpose LLMs produced harmful content in 29 to 54.4 percent of the same scenarios.

On eating disorder content, the gap was 8.4 percent versus 54 percent. On substance use, 9.9 percent versus 45 percent. These are not marginal performance differences between comparable systems. They are the difference between a system architected for clinical deployment and one that was not. And yet the overwhelming majority of products currently reaching users are built on the latter model, wrapped in a system prompt and shipped.

The latency trade-off deserves honest acknowledgement. Every additional processing layer adds time, and time in conversational AI is not a neutral variable. Users notice delay. Trust is partly a function of responsiveness. The clinical case for accepting that latency, however, is not complicated. In domains where a missed escalation signal is a preventable harm, the performance cost of a detection layer is not a design compromise, it is a requirement.

What Responsible Safety Infrastructure Actually Needs to Do

Thinking through safety middleware capabilities in three distinct tiers clarifies where the field is, where it needs to get to, and where the genuinely transformational opportunities lie. The market will conflate these tiers if the field lets it, and conflation produces either underpowered products dressed as safety infrastructure or overengineered systems that collapse under their own complexity.

1: What is essential. These are the capabilities without which no organisation should consider its deployment responsible, regardless of what the foundation model claims to handle:

independent audit trails that exist outside the model context and can be interrogated by a clinical governance board,

real-time crisis signal detection with defined, validated sensitivity thresholds built on semantic pattern recognition, not keyword matching, calibrated to understand intent across varied linguistic framing including the intellectualised, the oblique, and the minimised presentation,

escalation pathways to human support that are tested, staffed, and connected to a real clinical workflow rather than pointing to a generic helpline,

session continuity that preserves context across interactions, because a system that treats session fourteen as session one is not providing continuity of care,

model-agnostic architecture, because foundation models change and safety infrastructure that requires rebuilding every time a deploying organisation upgrades its underlying model is not infrastructure, and

prompt injection protection that prevents users from manipulating the system into abandoning its governance constraints through adversarial conversational techniques.

2: What adds meaningful clinical value. Beyond this, well-designed middleware begins to close the gap between what AI systems currently do and what clinical safety actually requires.

temporal pattern tracking is the most important advance in this tier,

trajectory is the central variable in clinical risk assessment, and

a system that evaluates each session independently discards the most diagnostically significant information available.

The concept of a User State Taxonomy, as a structured framework for recognising patterns of distress, escalation, loneliness, anxiety, and dependency, maps directly onto how clinical formulation works. The goal is not diagnosis nor is it surveillance. It is context-awareness in service of appropriate response calibration. The difference between a system that detects a crisis keyword and one that recognises a user moving through a pattern of increasing isolation and emotional constriction across three weeks of engagement is the difference between a smoke detector and a clinical early warning system. One catches events, the other tracks trajectories. Clinical safety requires both, and right now the field is building almost exclusively the first.

Dependency recognition belongs in this tier, and is more clinically complex than most engineers working in this space have yet grasped. The parasocial pull of a well-designed conversational AI is real and, for certain populations, clinically significant in ways that compound over time. A user who is increasingly reliant on AI interaction as a substitute for human connection, progressively narrowing their social world around a system that is always available and never challenging, is in a state that no content filter will ever flag, because the content is warm, appropriate, and in compliance with every policy the deploying organisation has written. The risk lives entirely in the pattern.

Only 16 percent of LLM-based chatbot interventions have undergone rigorous clinical efficacy testing. Simulations reveal psychological deterioration in over one-third of cases. That finding, from a 2025 analysis of AI safety training in mental health contexts, underscores what is at stake when the field treats clinical safety as an afterthought rather than an architectural requirement.

3: What could become genuinely game-changing. The capabilities in this tier do not yet exist at scale in any deployed product. They are serious, solvable engineering and clinical design problems, and the organisations that build them credibly will define what responsible AI in mental health means for the next decade.

Longitudinal outcome correlation is the capability I find most compelling and most underappreciated. A safety middleware layer that tracks user state across sessions, in a deployment context where users are also engaging with clinical services or completing validated outcome measures, is sitting on something the field has never had before: real-world behavioural signal data linked to clinical outcomes at population scale. The potential to understand which conversational patterns predict deterioration, which predict stabilisation, and which are clinically neutral would generate an evidence base for AI-mediated wellbeing support that current research methods cannot produce. The governance requirements to do this responsibly are significant and non-negotiable. The scientific and clinical value, built on rigorous consent and privacy architecture, is transformational.

Proactive response shaping based on detected state is the other capability the field is not yet taking seriously enough. Current safety architectures are almost entirely reactive. They detect something, flag it, and either block a response or route a user to a resource. What a mature state-aware middleware layer enables is something categorically more powerful: modulating how the AI responds in real time based on the user’s detected state, not just what they said but where they are. A user showing early escalation signals could receive responses that are warmer, more grounding, and more oriented toward human connection, not because a rule fired but because the system understood the state and shaped the conversation accordingly. A user showing dependency indicators could receive gentle relational friction, appropriate boundaries, and signposting toward human support, woven into the natural texture of the interaction rather than delivered as an interruption. This requires a rigorous clinical framework for what state-responsive conversation design looks like. Both the design challenge and the transparency requirement are achievable but neither is easy.

The Standard the Field Has Not Yet Set

The organisations beginning to build this infrastructure are not solving a product problem. They are making a structural argument about what AI in human contexts must be held accountable to. The argument is that capability without state-awareness is not safe deployment. That content moderation without clinical context is not clinical safety. That an audit trail recording what was said, but not the state of the person who said it, is not a governance record that any serious clinical organisation should find acceptable.

That argument is correct. And the urgency of getting there matters more than the field is currently behaving as though it does.

Every week that passes with LLM-wrapped wellbeing products deployed on prompt-based guardrails alone, every procurement decision made without demanding a defensible safety architecture, every clinical organisation that accepts “the model handles that” as a sufficient answer, is a week in which the gap between what these systems can do and what they are being held accountable for grows wider. The harm that results from that gap is not hypothetical. It is accumulating in real user interactions, in real moments of vulnerability, in real conversations that no one is tracking because no one built the layer that would track them.

The architecture to close that gap is arriving. The clinical frameworks to make it meaningful are within reach. The question the field now has to answer is whether the organisations deploying AI in mental health and wellbeing contexts will demand it before a harm becomes visible, or after.

That question is being answered right now, in every product decision that treats safety as a prompt engineering problem.

Scott Wallace, PhD, holds a clinical psychology and neuropsychology degree with training in coding (C++, iOS), UX design, and social analytics. He is an advisor to founders, clinicians, and investors building AI-enabled mental health systems. Scott has been leading digital health for 35+ years and built some of North America’s earliest digital mental health platforms. He also led the digital division of a major EAP provider through a successful exit, and served as clinical lead for NLP and AI-based mental health systems. Follow Scott on Substack and on LinkedIn at linkedin.com/in/scottwallacephd.