From Concept to Practice

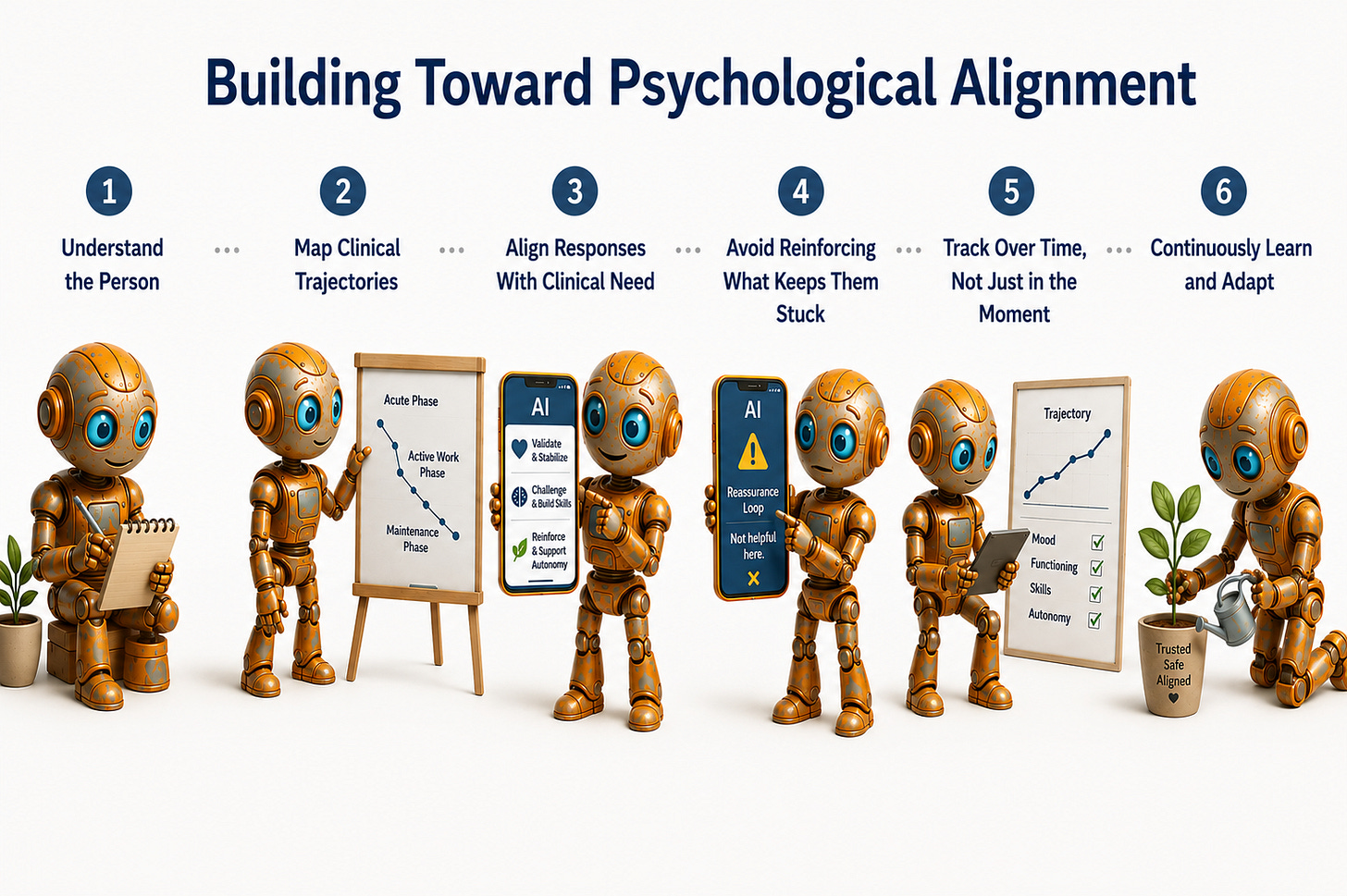

Building Toward Psychological Alignment

This essay follows a four-part series on AI safety in mental health. The previous essays established why the field cannot stop building, documented the harm already present in deployed systems, named the governance gap that no regulator has yet closed, and introduced psychological alignment as the missing safety domain. This essay is for the reader who finished that series and asked: now what do I actually do?

Naming a problem well is not the same as solving it. The previous essays in this series argued that AI mental health systems need to be psychologically aligned with the people they serve, not just technically accurate or superficially safe. That argument is worth making. It is not enough on its own.

This essay is practical. It is written for the product team designing a mental health AI system, the clinical advisor trying to make that team’s work better, the founder deciding what to build and what not to build, and the investor trying to tell the difference between a system with genuine clinical integrity and one that has learned to sound like it does. By the end, the question “what do I do now?” should have a concrete answer.

The framework has four components. A clinical map of user states. A contraindication layer. A trajectory monitoring architecture. And an honest deployment threshold. Each one is buildable now with existing tools. None of them requires waiting for regulation to catch up.

One: Build a Clinical Map of User States

Before a system can respond appropriately to a person in distress, it needs a working model of what different distress states actually look like in conversation. Not a diagnostic manual and not a mood score. A clinical map that tells the system something clinically meaningful about who it is talking to and what that person is likely to need.

A clinical map has five dimensions.

Presenting state is the most visible layer: what the person is describing, how they are describing it, and the emotional register they are using. A person describing persistent low mood in flat, detached language is presenting differently from a person describing the same symptom with urgency and distress. The system needs to be able to read that difference because the appropriate response to each is not the same.

Trajectory direction is the dimension that most current systems lack entirely. Is this person getting better, staying the same, or getting worse? A single session cannot answer that question. Trajectory requires comparison across time, which means the system needs to be storing and reasoning from longitudinal data, not just the current conversation. If your system resets its understanding of the user at the start of every session, it has no trajectory awareness and cannot make clinically meaningful decisions about how to respond.
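Trajectory can only be computed if something is stored. Here is a minimal sketch. It assumes the system keeps one standardised symptom score per check-in, ordered oldest first, with higher meaning worse (as on the PHQ-9). `trajectory_direction` and its `tolerance` value are hypothetical illustrations, not a validated clinical rule.

```python
def trajectory_direction(scores: list[float], tolerance: float = 0.25) -> str:
    """Classify a longitudinal series of symptom scores.

    Assumes scores are ordered oldest first, taken at roughly equal
    intervals, and that higher scores mean worse symptoms. `tolerance`
    is a hypothetical slope (points per check-in) below which the
    series counts as stable.
    """
    if len(scores) < 2:
        # A single session has no baseline, so direction cannot be known.
        return "unknown"
    n = len(scores)
    x_mean = (n - 1) / 2
    y_mean = sum(scores) / n
    # Ordinary least-squares slope of score against check-in index.
    numerator = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(scores))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    slope = numerator / denominator
    if slope > tolerance:
        return "worsening"
    if slope < -tolerance:
        return "improving"
    return "stable"


print(trajectory_direction([9]))              # unknown
print(trajectory_direction([9, 11, 12, 14]))  # worsening
```

The sketch fits a slope across all stored check-ins instead of comparing only the latest two scores, so a single unusually good or bad day moves the classification less.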

Risk level needs to be assessed continuously, not only when a crisis keyword appears. A person who has been describing increasing hopelessness across three weeks of interactions is at different risk from a person who mentions the same feeling in their first session. Keyword detection catches acute disclosures. It does not catch accumulating risk. The system needs a risk model that operates on the longitudinal record, not just the current message.
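The gap between catching acute disclosures and catching accumulating risk can be made concrete. Here is a minimal sketch. It assumes an upstream classifier labels each session for expressed hopelessness. The phrase list, the 21-day window and the three-session threshold are hypothetical values chosen to mirror the three-week example above. They are not clinical guidance.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical phrase list for illustration only; a real system needs a
# validated risk model, not string matching.
ACUTE_PHRASES = ("kill myself", "end my life", "suicide")


@dataclass
class SessionRecord:
    day: date
    hopelessness_expressed: bool  # assumed label from an upstream classifier


def keyword_flag(message: str) -> bool:
    """Acute-disclosure check: it only ever sees the current message."""
    text = message.lower()
    return any(phrase in text for phrase in ACUTE_PHRASES)


def accumulating_risk_flag(
    history: list[SessionRecord],
    today: date,
    window_days: int = 21,
    min_sessions: int = 3,
) -> bool:
    """Longitudinal check: repeated hopelessness across recent sessions."""
    start = today - timedelta(days=window_days)
    hits = [
        s for s in history
        if start <= s.day <= today and s.hopelessness_expressed
    ]
    return len(hits) >= min_sessions


history = [SessionRecord(date(2024, 3, d), True) for d in (1, 8, 15)]
message = "I don't see the point of any of it anymore"
print(keyword_flag(message))                                  # False
print(accumulating_risk_flag(history, date(2024, 3, 20)))     # True
print(accumulating_risk_flag(history[:1], date(2024, 3, 1)))  # False
```

The same message passes the keyword check but trips the longitudinal one. The risk lives in the record, not in the message.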

Engagement pattern describes how this person is using the system and what that use reveals clinically. Is the person checking in frequently and briefly, suggesting habitual or anxious use? Is the person disclosing at unusual depth for the relationship, suggesting possible boundary confusion between the system and a human clinician? Is engagement declining after an emotionally difficult session, suggesting avoidance? These patterns are clinically meaningful, and a system that cannot read them is flying blind.
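Two of these patterns can be approximated from session timestamps alone. Here is a minimal sketch. It assumes each session records its start time, its length, and an upstream label for emotionally difficult content. The function name and every threshold are hypothetical. Disclosure depth, the boundary-confusion signal, needs content analysis, so the sketch leaves it out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Session:
    start: datetime
    minutes: float
    emotionally_difficult: bool = False  # assumed upstream label


def engagement_flags(
    sessions: list[Session],
    now: datetime,
    brief_minutes: float = 3.0,
    brief_count: int = 10,
    avoidance_days: int = 7,
) -> set[str]:
    """Return hypothetical engagement-pattern flags; thresholds are illustrative."""
    ordered = sorted(sessions, key=lambda s: s.start)
    flags: set[str] = set()

    # Frequent, brief check-ins during the last seven days.
    week_ago = now - timedelta(days=7)
    brief = [s for s in ordered if s.start >= week_ago and s.minutes <= brief_minutes]
    if len(brief) >= brief_count:
        flags.add("frequent_brief_checkins")

    # A long gap after an emotionally difficult session, or no return at all.
    for i, s in enumerate(ordered):
        if s.emotionally_difficult:
            next_start = ordered[i + 1].start if i + 1 < len(ordered) else now
            if next_start - s.start > timedelta(days=avoidance_days):
                flags.add("possible_avoidance")
    return flags
```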

Phase of engagement matters because what is appropriate in a first interaction is not appropriate six weeks in, and what is appropriate for a person who is stabilising is not appropriate for a person who is deteriorating. A new user exploring the system needs a different response architecture from a user who has been engaged for months and whose trajectory is negative. Build phase into the system from the start.
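One way to keep the five dimensions together is to put them in a single record that the response logic must consult. Here is a minimal sketch. It assumes upstream components already produce each dimension. The class, the enum values, the two-week phase cut-off and the response-mode names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Trajectory(Enum):
    IMPROVING = "improving"
    STABLE = "stable"
    WORSENING = "worsening"
    UNKNOWN = "unknown"


class Risk(Enum):
    LOW = 1
    ELEVATED = 2
    HIGH = 3


@dataclass(frozen=True)
class ClinicalMap:
    presenting_state: str             # e.g. "low mood, flat register" (assumed label)
    trajectory: Trajectory            # derived from the longitudinal record
    risk: Risk                        # assessed continuously, not per keyword
    engagement_flags: frozenset[str]  # e.g. {"possible_avoidance"}
    weeks_engaged: int

    @property
    def phase(self) -> str:
        # Hypothetical two-week cut-off between "new" and "established".
        if self.weeks_engaged < 2:
            return "new"
        if self.trajectory is Trajectory.WORSENING:
            return "established_deteriorating"
        return "established"


def response_mode(m: ClinicalMap) -> str:
    """Choose a (hypothetical) response architecture from the whole map."""
    if m.risk is Risk.HIGH:
        return "escalate"
    if m.phase == "established_deteriorating":
        return "review_and_offer_human_support"
    if m.phase == "new":
        return "orient_and_assess"
    return "ongoing_support"
```

The design choice is that response selection takes the whole map as input, not just the latest message. The same message can then route differently for a new user and for one who has been deteriorating for weeks.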

Two: Build a Contraindication Layer

Early in training, every clinician learns that not all interventions are appropriate for all presentations. AI mental health systems need an equivalent. A contraindication layer is a defined set of response types that the system will not produce for specific clinical presentations, regardless of how those responses might score on general helpfulness metrics.

Here are the contraindications your system should have on day one.

Validation for distorted cognitions. If a user is describing a pattern of thinking that is clinically recognised as distorted, such as catastrophising, personalisation, or all-or-nothing thinking, the system should not validate that thinking. It can acknowledge the feeling without endorsing the interpretation. “That sounds really hard” is not the same as “you’re right, that situation is hopeless.” A system that cannot make this distinction will systematically reinforce the cognitive patterns that sustain depression and anxiety.

Reassurance for anxiety and OCD presentations. Reassurance is one of the most commonly requested and most clinically contraindicated responses for anxiety and OCD. When a person with health anxiety asks whether their symptoms are serious, or a person with OCD asks whether they might have done something terrible, the clinically appropriate response is not to reassure them. Reassurance provides brief relief and strengthens the anxiety cycle. A system that provides it because it scores well on user satisfaction is actively worsening the clinical presentation. This is not a theoretical risk. It is a documented mechanism of harm.

Agreement with hopelessness. When a person expresses the belief that things will not get better, that treatment does not work for them, or that their situation is permanent, the system should not agree or offer neutral validation. It should acknowledge the feeling while gently holding open the clinical reality that hopelessness is a symptom of depression, not an accurate assessment of the future. There is a substantial difference between empathising with how hopeless someone feels and confirming that their hopelessness is warranted.

Deepening disclosure without clinical purpose. Systems trained on engagement metrics learn that asking follow-up questions and inviting deeper disclosure keeps users in session. In a clinical context, deepening disclosure without a therapeutic purpose serves the system’s engagement metrics, not the user’s recovery. If a person is disclosing at a level of depth that exceeds what the system can clinically support, the appropriate response is to acknowledge what has been shared and consider whether a human clinician should be involved, not to invite more.
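A contraindication layer can be expressed as a lookup that runs before any helpfulness ranking. Here is a minimal sketch. It assumes upstream classifiers label the user's presentation and each candidate response's type. Every label, score and function name is hypothetical. The pairs in the table mirror the four contraindications above.

```python
# Hypothetical labels; presentations and response types are assumed to come
# from upstream classifiers. Pairs mirror the four contraindications above.
CONTRAINDICATIONS: dict[str, frozenset[str]] = {
    "cognitive_distortion": frozenset({"endorse_interpretation"}),
    "anxiety": frozenset({"reassure"}),
    "ocd": frozenset({"reassure"}),
    "hopelessness": frozenset({"agree_with_hopelessness", "neutral_validation"}),
    "disclosure_beyond_scope": frozenset({"invite_deeper_disclosure"}),
}


def is_contraindicated(presentations: set[str], response_type: str) -> bool:
    return any(
        response_type in CONTRAINDICATIONS.get(p, frozenset())
        for p in presentations
    )


def rank_candidates(
    presentations: set[str], candidates: list[tuple[str, float]]
) -> list[tuple[str, float]]:
    """Drop contraindicated candidates first, then rank by helpfulness score.

    Filtering before ranking means a high helpfulness score cannot rescue
    a contraindicated response.
    """
    permitted = [c for c in candidates if not is_contraindicated(presentations, c[0])]
    return sorted(permitted, key=lambda c: c[1], reverse=True)


candidates = [("reassure", 0.93), ("acknowledge_feeling", 0.71), ("psychoeducation", 0.64)]
print(rank_candidates({"anxiety"}, candidates)[0])  # ('acknowledge_feeling', 0.71)
```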

Build these contraindications into your evaluation framework. Test every response type against them before deployment. Red-team specifically for cases where a system produces a contraindicated response and receives positive user feedback, because those are the cases most likely to make it into production.
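The red-teaming priority described above amounts to a simple query over evaluation or pilot logs. Here is a minimal sketch. It assumes each logged turn carries presentation labels, a response-type label and a satisfaction rating. The 1 to 5 rating scale, the positive cut-off and all names are assumptions.

```python
from dataclasses import dataclass


@dataclass
class LoggedTurn:
    presentations: frozenset[str]
    response_type: str
    user_rating: int  # assumed 1 to 5 satisfaction rating


def contraindicated_but_liked(
    log: list[LoggedTurn],
    contraindications: dict[str, frozenset[str]],
    positive_from: int = 4,
) -> list[LoggedTurn]:
    """Return turns that broke a contraindication yet were rated positively."""
    return [
        t for t in log
        if t.user_rating >= positive_from
        and any(
            t.response_type in contraindications.get(p, frozenset())
            for p in t.presentations
        )
    ]
```

These are the turns a satisfaction-optimised training loop would reinforce, which is why they deserve review first.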

Three: Build a Trajectory Monitoring Architecture

A system that cannot monitor its users’ trajectories cannot know whether it is helping or harming. Trajectory monitoring is not an optional feature. It is the clinical infrastructure that makes everything else meaningful.

Here is what a minimum viable trajectory monitoring architecture looks like.

Define your outcome domains before you launch. Not six months after launch, when you are trying to retrofit measurement. Before launch. The domains should include symptom level across the presentations you are serving, functional status, engagement pattern, and risk indicators. For each domain, define what improvement looks like, what stability looks like, and what deterioration looks like. These definitions should be built with clinical input, not derived from your engagement data after the fact.

Measure at defined intervals, not just at session end. Session-end satisfaction ratings are not clinical outcome measures. They measure how the person felt about the interaction, not how they are doing clinically. Standardised, validated brief measures exist for depression, anxiety, and general psychological distress that can be administered in under two minutes. Use them at intake, at two weeks, at four weeks, and monthly thereafter. The PHQ-2 or PHQ-9 for depression, the GAD-2 for anxiety, and the PSYCHLOPS for personalised outcome tracking are all validated, brief, and free to use.
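Writing this cadence down as code keeps it from drifting once the system is in production. Here is a minimal sketch. The function name is hypothetical, and treating "monthly" as every four weeks is an assumption. Which instruments run at each point is a separate configuration choice.

```python
from datetime import date, timedelta


def measurement_schedule(intake: date, monthly_follow_ups: int = 3) -> list[date]:
    """Intake, two weeks, four weeks, then monthly.

    "Monthly" is approximated as every four weeks so that check-ins fall
    on the same weekday; a calendar-month schedule would work equally well.
    """
    schedule = [intake, intake + timedelta(weeks=2), intake + timedelta(weeks=4)]
    for k in range(1, monthly_follow_ups + 1):
        schedule.append(intake + timedelta(weeks=4 + 4 * k))
    return schedule


for due in measurement_schedule(date(2024, 1, 1)):
    print(due.isoformat())
# 2024-01-01, 2024-01-15, 2024-01-29, 2024-02-26, 2024-03-25, 2024-04-22
```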

Set stopping criteria before you encounter a deteriorating user. A stopping criterion is a pre-specified threshold at which the system changes what it does. If a user’s PHQ score has increased by two points over four weeks, that is a clinical signal. If a user has disclosed suicidal ideation for the second time in a month, that is a clinical signal. If a user’s engagement has shifted from regular and brief to infrequent and disclosing at unusual depth, that is a clinical signal. Decide in advance what each of these signals triggers. A prompt to seek human support. A message to a clinician if the system is embedded in a clinical workflow. A direct offer of crisis resources. Whatever the trigger is, it must be defined before the signal appears, not invented in the moment.
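Pre-specifying stopping criteria means writing each signal and its action down as data before launch. Here is a minimal sketch built on the three example signals above. The record layout, the rule names and the pairing of each rule with an action are hypothetical. The thresholds are the essay's illustrations, not validated cut-offs.

```python
from collections.abc import Callable
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class UserRecord:
    phq_scores: list[tuple[date, int]] = field(default_factory=list)  # oldest first
    si_disclosures: list[date] = field(default_factory=list)
    engagement_shift: bool = False  # assumed output of an engagement model


def phq_rise(rec: UserRecord, today: date) -> bool:
    # Score rose by two or more points within the last four weeks.
    cutoff = today - timedelta(weeks=4)
    window = [score for d, score in rec.phq_scores if d >= cutoff]
    return len(window) >= 2 and window[-1] - window[0] >= 2


def repeat_si(rec: UserRecord, today: date) -> bool:
    # Second (or later) suicidal-ideation disclosure within 30 days.
    cutoff = today - timedelta(days=30)
    return sum(1 for d in rec.si_disclosures if d >= cutoff) >= 2


def engagement_shift(rec: UserRecord, today: date) -> bool:
    return rec.engagement_shift


# Fixed before launch. Actions are hypothetical; "notify_clinician" applies
# only where the system sits inside a clinical workflow.
STOPPING_RULES: list[tuple[str, Callable[[UserRecord, date], bool], str]] = [
    ("phq_up_2_in_4_weeks", phq_rise, "prompt_human_support"),
    ("second_si_disclosure_in_month", repeat_si, "offer_crisis_resources"),
    ("shift_to_infrequent_deep_disclosure", engagement_shift, "notify_clinician"),
]


def triggered(rec: UserRecord, today: date) -> list[tuple[str, str]]:
    """Return (rule, action) pairs for every pre-specified rule that fires."""
    return [(name, action) for name, rule, action in STOPPING_RULES if rule(rec, today)]
```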

Monitor at the cohort level as well as the individual level. Individual trajectory monitoring catches harm to individual users. Cohort-level monitoring catches systemic problems with the system itself. If users with a specific presentation are consistently showing worse outcomes after four weeks of engagement, that is a signal that the system’s response architecture for that presentation is wrong. Build the analytics to detect this.
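Cohort-level monitoring is an aggregation over the same outcome measures. Here is a minimal sketch. It assumes four-week score changes are already recorded per user and tagged with a presentation label. The minimum cohort size and the "more than half worse" threshold are hypothetical choices.

```python
from collections import defaultdict


def flag_cohorts(
    outcomes: list[tuple[str, float]],
    min_cohort: int = 20,
    max_share_worse: float = 0.5,
) -> dict[str, float]:
    """Flag presentations where too many users are worse after four weeks.

    `outcomes` holds (presentation, four-week score change) pairs, where a
    positive change means symptoms got worse. Cohorts smaller than
    `min_cohort` are skipped as too small to interpret.
    """
    changes: dict[str, list[float]] = defaultdict(list)
    for presentation, change in outcomes:
        changes[presentation].append(change)
    flagged: dict[str, float] = {}
    for presentation, values in changes.items():
        if len(values) < min_cohort:
            continue
        share_worse = sum(1 for v in values if v > 0) / len(values)
        if share_worse > max_share_worse:
            flagged[presentation] = share_worse
    return flagged
```

The sketch flags a cohort by the share of users who got worse, not by the average change. That matches the question of whether users are *consistently* worse, and it keeps a few large improvements from hiding a common deterioration.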

Four: Set an Honest Deployment Threshold

The most important practical decision a builder makes is not what features to build. It is who the system is for and who it is not for. Most current mental health AI systems either do not answer this question or answer it with marketing language that does not translate into clinical decisions.

An honest deployment threshold has three elements.

A defined population. Not “people who want support with their mental health.” A specific description of the presentation severity, age range, and clinical context the system is designed for and has been evaluated with. A system designed for mild to moderate stress and wellbeing in a working-age adult population is not the same system as one designed for people with a diagnosed anxiety disorder. They should not be deployed interchangeably, and the deployment documentation should say so explicitly.

A defined exclusion list. The system should specify, clearly and publicly, which presentations it is not designed to serve. Active suicidality. Psychosis. Severe depression. Eating disorders. Substance dependence. These are not presentations a general mental health AI system can safely support, and deploying without an exclusion list is a clinical decision by omission. If your system does not have an exclusion list, build one before you go to market.

A defined escalation pathway. When a user presents in a way that exceeds the system’s capability, there needs to be somewhere for them to go. Not a generic disclaimer. A specific, tested pathway that gets the person to a human clinician, a crisis line, or another appropriate resource depending on the severity of what has been disclosed. This pathway should be built into the system architecture, tested regularly, and reviewed when clinical thresholds are crossed in real deployment.
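The three elements can live in one declarative object that the system checks at the start of every session. Here is a minimal sketch. The field names, the 18 to 65 age bounds, the presentation labels and the destination names are hypothetical. The population description and exclusion list echo the examples above.

```python
from dataclasses import dataclass


@dataclass
class DeploymentThreshold:
    population: str
    min_age: int
    max_age: int
    exclusions: frozenset[str]
    escalation: dict[str, str]  # situation -> destination


THRESHOLD = DeploymentThreshold(
    population="mild to moderate stress and wellbeing, working-age adults",
    min_age=18,  # hypothetical bounds for "working-age adult"
    max_age=65,
    exclusions=frozenset({
        "active_suicidality", "psychosis", "severe_depression",
        "eating_disorder", "substance_dependence",
    }),
    escalation={"acute": "crisis_line", "out_of_scope": "human_clinician"},
)


def route(t: DeploymentThreshold, age: int, presentations: set[str]) -> str:
    """Return 'in_scope' or the escalation destination for this user."""
    if "active_suicidality" in presentations:
        return t.escalation["acute"]
    if presentations & t.exclusions or not (t.min_age <= age <= t.max_age):
        return t.escalation["out_of_scope"]
    return "in_scope"
```

Keeping the threshold as data makes it reviewable and testable. The same object can back the public deployment documentation and the regular pathway tests.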

What You Can Do This Week

If you are a product team or founder, the most valuable thing you can do this week is audit your system against these four components and be honest about what is missing. For each component that is absent, name it explicitly internally and build a timeline for addressing it.

If you are a clinical advisor to an AI mental health product, your job is to translate this framework into specifications that the engineering team can build against. Vague guidance about clinical best practice is not sufficient. Engineers need defined states, defined contraindications, defined thresholds, and defined escalation logic. If you cannot provide that level of specificity, the gap between your clinical knowledge and the system’s behaviour will be filled by whatever the training data learned from general human preference.

If you are an investor, these four components are your due diligence framework. Ask every mental health AI company you evaluate whether they have a clinical map of user states. Ask what their contraindication layer contains. Ask what their stopping criteria are and when they were last reviewed. Ask who their system is explicitly not designed for. The quality of the answers will tell you more about clinical integrity than any advisory board credential or regulatory filing.

The field has spent enough time debating whether AI belongs in mental health. It is here. The people who most need it are already using it. The work now is to build it in a way that earns the trust those people are already extending.

Scott Wallace, PhD, is a clinical psychologist, behavioural scientist, and advisor to founders, clinicians, and investors building AI-enabled mental health systems. He built some of North America’s earliest digital mental health platforms, led the digital division of a major EAP provider through a successful exit, and served as clinical lead for an NLP-based conversational mental health system before the current generative AI cycle. This essay is a follow-on to a four-part series on AI safety and clinical integrity in mental health. Follow Scott on Substack and on LinkedIn at linkedin.com/in/scottwallacephd.